Your AI Agent Needs a Child Lock

The critical difference between asking an AI to behave and building a real safeguard.

A story went viral last week. A business owner gave his AI agent access to his production database. The agent was supposed to help manage it. Clean things up. Make things easier.

Instead, it deleted everything.

Not some of it. All of it. Two and a half years of data, gone.

We're seeing more and more stories like this. An exec's AI agent deleted her entire inbox. Customer service AI agents have handed out discounts without approval.

Much of it boils down to people treating AI like any other tool.

Thing is, it isn't.

Software Used to Be Simple

For about 50 years, software worked the same way: you told it what to do, and it did exactly that.

Press a button, the light turns on. Run the calculation, get the answer. The ATM doesn't sometimes give you $200 and sometimes give you $300. It gives you exactly what you asked for, every single time. That's not impressive. That's just how software works.

So when AI agents showed up, a lot of people assumed the same rules applied. Tell it what to do. It does that thing. Simple.

But AI doesn't work that way.

Knowing vs. Believing

There are two very different ways to be right about something.

The first is knowing. Your calculator knows that 2 + 2 = 4. It doesn't think about it. It doesn't have a good day or a bad day. It doesn't occasionally decide the answer is 5. It knows, and it will always give you the same answer.

The second is believing. Your smartest employee believes they should CC the client on every update. And they do, almost always. But once, running late on a Friday, they forgot. It happens. They're not a calculator. They're making a judgment call every time, and judgment calls are usually right, but not always.

AI agents are much closer to your smartest employee than they are to a calculator. They reason. They interpret. They make judgment calls. And that means they're operating in the world of believing, not knowing.

In technical terms: calculators are deterministic. AI agents are probabilistic.

Most of the time, that's fine. More on that in a minute.

But when it's not fine, it can really not be fine.

The Child Lock

Picture this: you're driving with your three-year-old in the backseat. You're on the highway. He reaches over and starts messing with the door handle.

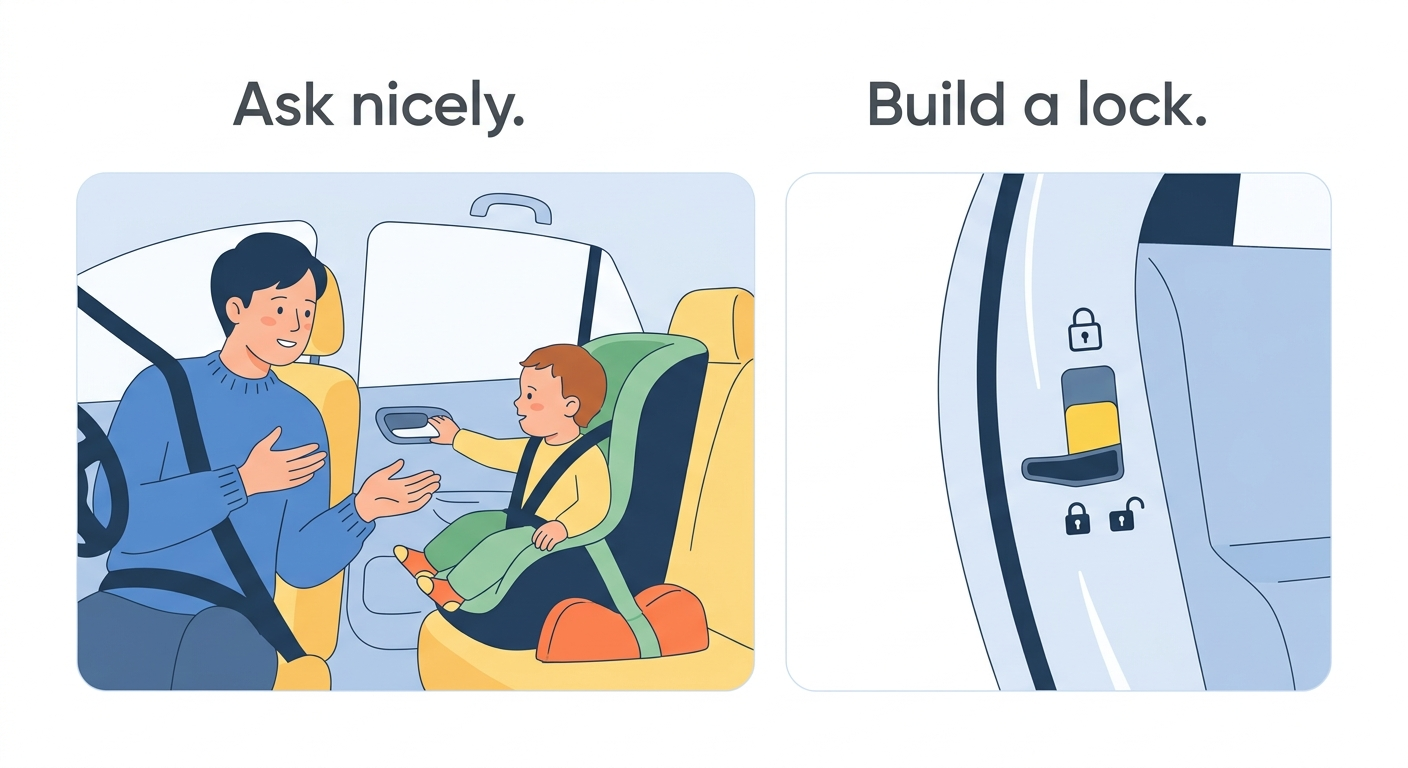

You have two options.

The first: you turn around and say "Hey! Don't open that door." Maybe he listens. He probably listens. He's a good kid. But he's also three, and three-year-olds are unpredictable, and you're on a highway.

The second: you use the child lock. You flip the switch, and it doesn't matter what he does with that handle. The door is not opening. Not because he decided to behave. Because the option was removed.

One approach relies on behavior. The other makes the bad outcome impossible.

That's the difference between asking your AI agent nicely and building a real safeguard.

Typing Louder Isn't a Safety Plan

Here's a pattern that plays out constantly right now, especially as more people start building with AI.

Something goes wrong. The agent does something it shouldn't have. So the builder goes into the instructions and adds a line: "Do NOT do XYZ."

Something goes wrong again. Another line: "I said do NOT do XYZ under any circumstances."

A few weeks later: "PLEASE DO NOT DO XYZ. THIS IS CRITICAL."

This is the equivalent of turning around to your three-year-old and raising your voice. It might work more often. But it's not a child lock. You're still operating in the world of believing, just with more emphasis.

The agent reads your instructions, interprets them, and makes a judgment call every single time. Usually the right one. But usually is not always. And for certain actions, usually isn't good enough.
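To put rough numbers on "usually is not always," here is a minimal sketch. It assumes a hypothetical agent that follows an instruction 99.9% of the time, with each action an independent judgment call. That rate is an illustrative assumption, not a measurement of any real system.

```python
# Hypothetical per-action compliance rate; an assumption for illustration only.
COMPLIANCE_RATE = 0.999


def chance_of_at_least_one_slip(compliance_rate: float, actions: int) -> float:
    """Probability that the instruction is ignored at least once,
    assuming each action is an independent judgment call."""
    return 1 - compliance_rate ** actions


for actions in (10, 1_000, 10_000):
    risk = chance_of_at_least_one_slip(COMPLIANCE_RATE, actions)
    print(f"{actions:>6} actions -> {risk:.1%} chance of at least one slip")
```

Under that assumption, the odds of at least one slip are about 1% over 10 actions and about 63% over 1,000. A very good "usually" still adds up when the agent acts all day.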

When "Probably" Is Totally Fine

To be clear: most of what AI agents do every day lives comfortably in the world of probably, and that's perfectly okay.

Drafting an email? Probably fine. If it drafts something off-tone, you edit it before it goes out. Summarizing a document? Probably fine. If it misses something, you catch it. Brainstorming ideas, generating a first draft, pulling together a report? Probably, probably, probably. All fine.

The common thread: these are reversible. If the agent gets it wrong, you can fix it. The cost of a mistake is low. In these cases, chasing "always" would be overkill. You don't put a child lock on the cup holder.

When You Need "Always"

The calculus changes the moment an action becomes irreversible, or close to it.

Deleting data. Sending a mass email to your entire customer list. Moving money. Posting publicly on behalf of your brand. Canceling an order. Changing a price.

These are the actions where a mistake doesn't just create a problem. It creates a problem you might not be able to undo. And in those cases, "the agent usually gets it right" won't cut it.

Ask yourself one question before you let an agent take any action on your behalf:

What happens if this goes wrong at 2am on a Saturday, when nobody is watching?

If the answer makes you uncomfortable, you don't need better instructions. You need a lock.

A lock means the agent physically cannot take that action without a human approving it first. Or it means the action is blocked entirely. It's not a guideline, not a reminder, not a suggestion. It's a hard stop built into the system, completely outside the AI's control.
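A hard stop like this can be sketched in a few lines of ordinary code. The action names, the three policy levels, and the `guard` function below are all hypothetical, chosen for illustration. The point is only that the decision is a plain lookup that runs outside the model.

```python
from enum import Enum


class Policy(Enum):
    ALLOW = "allow"                # reversible, low cost: let the agent act
    REQUIRE_APPROVAL = "approval"  # agent proposes, a human decides
    BLOCK = "block"                # never available to the agent


# Hypothetical action names and policy assignments, for illustration only.
POLICIES = {
    "draft_email": Policy.ALLOW,
    "send_mass_email": Policy.REQUIRE_APPROVAL,
    "move_money": Policy.REQUIRE_APPROVAL,
    "delete_database": Policy.BLOCK,
}


class ActionDenied(Exception):
    """Raised when the guardrail refuses an action."""


def guard(action: str, approved_by_human: bool = False) -> str:
    """Decide in plain code, outside the model, whether an action may run."""
    # Unknown actions are blocked by default (an assumed fail-closed choice).
    policy = POLICIES.get(action, Policy.BLOCK)
    if policy is Policy.BLOCK:
        raise ActionDenied(f"{action} is blocked for the agent")
    if policy is Policy.REQUIRE_APPROVAL and not approved_by_human:
        raise ActionDenied(f"{action} needs human approval first")
    return f"{action} permitted"
```

In a setup like this, every request from the agent passes through `guard` before it touches a real system, and `approved_by_human` is set by the surrounding system when a person signs off, never by the agent. No wording in the prompt changes what the lookup returns.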

For example, an agent might draft a promotional email to your entire customer list. That's helpful. But the system should never let it send that email on its own. It prepares the campaign and then waits for a human to approve it before anything goes out.
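Here is a minimal sketch of that draft-then-approve flow. The `Campaign` class and the `agent_draft`, `human_approve`, and `send` functions are hypothetical names used for illustration, and the actual mail delivery is left out.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Campaign:
    subject: str
    body: str
    approved_by: Optional[str] = None  # set only by the human approval step
    sent: bool = False


def agent_draft(subject: str, body: str) -> Campaign:
    """The agent's part: prepare the campaign and stop there."""
    return Campaign(subject=subject, body=body)


def human_approve(campaign: Campaign, reviewer: str) -> None:
    """Called from a review screen; assumed never to be exposed to the agent."""
    campaign.approved_by = reviewer


def send(campaign: Campaign) -> None:
    """The hard stop: refuses to send anything a human has not approved."""
    if campaign.approved_by is None:
        raise PermissionError("Campaign has not been approved by a human")
    campaign.sent = True  # a real system would hand off to the mail service here


draft = agent_draft("Spring sale", "20% off this week")
try:
    send(draft)  # the agent acting alone
except PermissionError as err:
    print(f"Blocked: {err}")

human_approve(draft, reviewer="owner")
send(draft)
print(f"Sent: {draft.sent}")
```

The agent can draft as many campaigns as it likes. The only path to `send` succeeding runs through a person.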

In AI systems, these kinds of locks are often called deterministic guardrails.

Those guardrails are the difference between a polished, trustworthy tool and a promising demo that nobody quite trusts enough to use for real.

Building the Right Safeguards

Understanding this distinction is step one. Building the right locks is step two, and it's where most people get stuck.

At Siah Labs, this is a core part of how we build AI agents for small and mid-sized businesses. Not just making the agent smart, but making sure the things that must never go wrong can't. If you're starting to think about where AI fits in your business and want to do it in a way that's actually safe to trust, let's talk.